| Statistic | Best-match Jaccard similarity |
|---|---|
| Minimum | 0.025 |
| 1st Quartile | 0.037 |
| Median | 0.040 |
| Mean | 0.043 |
| 3rd Quartile | 0.049 |
| Maximum | 0.070 |

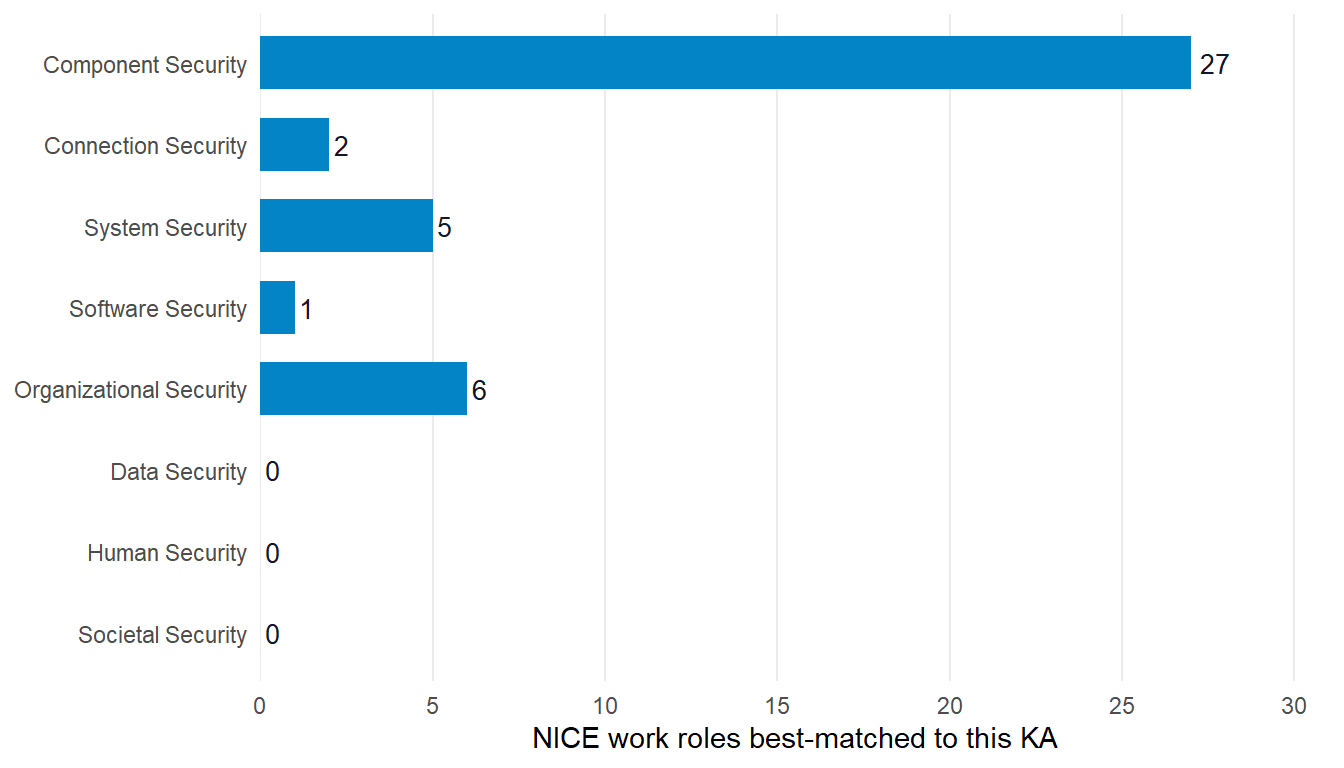

Where do NICE work roles align with CSEC2017 Knowledge Areas?

Cross-framework similarity at the role-to-curriculum-area level

The question

For each of the 41 NICE work roles, what is the closest CSEC2017 Knowledge Area by full-document text similarity, and how does that similarity distribute across the 8 KAs?

Why it matters

Both NICE and CSEC2017 are widely referenced US cybersecurity frameworks with overlapping audiences. CAE designation files cite both. ABET cybersecurity program criteria align loosely to both. Federal and academic policy discussions invoke both. Practitioners frequently ask whether a NICE-aligned curriculum implicitly addresses CSEC2017 KAs, or whether mapping between the two is straightforward.

The frameworks were designed for different purposes. NICE specifies work roles defined by the tasks, knowledge, and skills a person in the position performs. CSEC2017 specifies curricular Knowledge Areas defined by what a cyber program teaches. They sit at different abstraction layers and use different vocabularies on purpose.

This query asks whether that difference in purpose shows up as a difference in vocabulary.

The result

Every best-match similarity sits below 0.10. The strongest pairing in the entire 41-role set is roughly 7% vocabulary overlap. The median is 4%.

Of 41 NICE work roles, 27 (66%) best-match to Component Security. The remaining 14 distribute across four KAs (Organizational, System, Connection, Software). Three KAs attract zero NICE roles as top match.

What this tells us

The two frameworks are not text-aligned

A best-match similarity ceiling of 0.07 across all 41 NICE roles is the structural finding. Even the highest-overlap pairs share fewer than one in fourteen unique tokens. The vocabulary similarity is too low to support any defensible NICE-to-CSEC2017 crosswalking at the role-to-KA level.
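To make the one-in-fourteen reading concrete, here is a minimal sketch of Jaccard similarity on sets of unique tokens. The helper name `jaccard` and the toy token vectors are assumptions for illustration; they are not taken from the repository's prep script.

```r
# Jaccard similarity between two token vectors, treated as sets.
# `jaccard` is a hypothetical helper, written here for illustration only.
jaccard <- function(a, b) {
  a <- unique(a)
  b <- unique(b)
  union_size <- length(union(a, b))
  if (union_size == 0) return(NA_real_)  # undefined for two empty sets
  length(intersect(a, b)) / union_size
}

# Toy example: one shared token out of 14 distinct tokens overall.
role_tokens <- c("shared", paste0("role_term_", 1:6))  # 7 unique tokens
ka_tokens   <- c("shared", paste0("ka_term_", 1:7))    # 8 unique tokens
score <- jaccard(role_tokens, ka_tokens)               # 1 / 14
```

In this toy case the score is 1/14, about 0.071, which is just above the reported maximum of 0.070. That is the sense in which even the strongest pair shares fewer than one in fourteen unique tokens.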

Component Security dominance is a vocabulary-scale artifact

Component Security’s 8-Topic scope covers hardware, firmware, integrated systems, embedded design, and component testing. That vocabulary is broad enough to overlap weakly with any NICE role that mentions technology components. Other KAs use narrower, more specialized vocabulary. Human Security covers identity, social engineering, awareness. Societal Security covers policy, ethics, law. Neither overlaps with NICE workforce-position language regardless of the role’s semantic relationship to those topics.

Evidence of absence is useful

A practitioner considering a manual NICE-to-CSEC2017 crosswalk can use this result to decide not to do the work. The frameworks do not align at this layer because they were not designed to align at this layer. The right crosswalk for a NICE-aligned program is to the CAE Knowledge Unit set (NSA/CISA), not to CSEC2017 Knowledge Areas.

What this doesn’t tell us

Vocabulary similarity is not the only similarity that matters

NICE and CSEC2017 share many concepts at the semantic level (both cover incident response, cryptography, access control) but use different terminology and different framing. A NICE-aligned curriculum may genuinely address CSEC2017 Knowledge Areas through specific course content that doesn’t share much vocabulary with the NICE TKS statements. The text-similarity metric here can’t detect that alignment.

This is not a quality claim against either framework

Low vocabulary overlap reflects different design purposes, not different quality. NICE is excellent for workforce planning. CSEC2017 is excellent for curriculum architecture. Their inability to align at the vocabulary level is a structural feature of two well-designed frameworks pursuing different goals.

Different metrics produce different results

TF-IDF cosine similarity, embedding-based similarity, and topic-modeling approaches would each give different alignment estimates. Jaccard on unigrams, the metric used here, is the simplest and most interpretable choice. Downstream analysts can re-run the comparison on the same RDF graph with whatever metric suits their question.
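As a rough illustration of how the choice of metric changes the numbers, the sketch below scores the same toy documents with set-based Jaccard and with TF-IDF cosine similarity in base R. The documents, the helper names (`cosine`, `jaccard_sets`), and the smoothed IDF formula are all assumptions chosen for the example, not the prep script's method.

```r
# Toy corpus: named list of token vectors (illustrative data only).
docs <- list(
  role = c("analyze", "network", "traffic", "network", "incident"),
  ka_a = c("network", "protocol", "traffic", "design"),
  ka_b = c("policy", "ethics", "law", "incident")
)
vocab <- sort(unique(unlist(docs)))

# Term-frequency matrix: one row per document, one column per vocabulary term.
tf <- t(vapply(
  docs,
  function(tokens) as.numeric(table(factor(tokens, levels = vocab))),
  numeric(length(vocab))
))
colnames(tf) <- vocab

# Smoothed inverse document frequency (one common variant, assumed here).
n_docs <- nrow(tf)
doc_freq <- colSums(tf > 0)
idf <- log((1 + n_docs) / (1 + doc_freq)) + 1
tfidf <- sweep(tf, 2, idf, `*`)

# Hypothetical helpers for the two metrics.
cosine <- function(x, y) sum(x * y) / sqrt(sum(x^2) * sum(y^2))
jaccard_sets <- function(a, b) length(intersect(a, b)) / length(union(a, b))

jac_a <- jaccard_sets(docs$role, docs$ka_a)
jac_b <- jaccard_sets(docs$role, docs$ka_b)
cos_a <- cosine(tfidf["role", ], tfidf["ka_a", ])
cos_b <- cosine(tfidf["role", ], tfidf["ka_b", ])
```

Jaccard ignores how often a term appears, while TF-IDF cosine weights repeated and rarer terms more heavily, so the two metrics can agree on the ranking of matches and still disagree on the magnitudes.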

Look up by NICE work role

The widget below shows each of the 41 NICE work roles with its top-3 CSEC2017 Knowledge Areas by full-document Jaccard. Search by role name (“incident,” “forensics,” “policy”) or sort by overlap percentage.

Rows group by NICE work role. Expand a role to see its top-3 CSEC2017 matches in similarity-descending order. The headline finding (every best match below 10% overlap) is visible in the data: no role's top-ranked match exceeds 7%.
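The top-3 selection and the best-match tally can be sketched with `dplyr`, assuming a long table of pairwise scores. The data frame `pairs`, its column names (`role`, `ka`, `jaccard`), and every value in it are made up for illustration; the real table comes from the prep script.

```r
library(dplyr)

# Hypothetical pairwise scores: one row per (role, KA) pair. Values are invented.
pairs <- data.frame(
  role    = rep(c("Role A", "Role B"), each = 4),
  ka      = rep(c("KA 1", "KA 2", "KA 3", "KA 4"), times = 2),
  jaccard = c(0.050, 0.040, 0.030, 0.010,
              0.020, 0.060, 0.035, 0.030)
)

# Keep the three highest-scoring KAs per role, in similarity-descending order.
top3 <- pairs |>
  group_by(role) |>
  slice_max(jaccard, n = 3, with_ties = FALSE) |>
  ungroup() |>
  arrange(role, desc(jaccard))

# Count how many roles have each KA as their single best match.
best_counts <- top3 |>
  group_by(role) |>
  slice_max(jaccard, n = 1, with_ties = FALSE) |>
  ungroup() |>
  count(ka, name = "n_roles")
```

Setting `with_ties = FALSE` guarantees exactly three rows per role; whether tied scores should instead all be kept is a design choice for the analyst.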

Reproduce this

    library(cybedtools)
    library(dplyr)
    library(stringr)
    library(purrr)
    library(tidyr)
    library(rdflib)

    g <- load_combined_ntriples_graph()

    # Pull NICE work roles + CSEC2017 KAs.
    units <- organizing_unit_framework_bindings(g)

    nice_roles <- units |>
      filter(grepl("^NICE", framework_name)) |>
      select(unit, unit_name)

    csec_kas <- units |>
      filter(grepl("CSEC2017", framework_name)) |>
      select(unit, unit_name)

    # Build full-document text per unit (parent description + all child element text).
    # Tokenize, drop stopwords and short tokens, compute Jaccard pairwise,
    # keep the top 3 CSEC2017 KAs per NICE work role. Full prep script in
    # `_data-prep-nice-csec2017-alignment.R` in the repository.

Use case using this data

- Marcus uses this analysis to decide against a manual NICE-to-CSEC2017 crosswalk (the practitioner-facing version of this result).