| Jurisdiction | Frameworks | Frameworks (n) | Total elements |

|---|---|---|---|

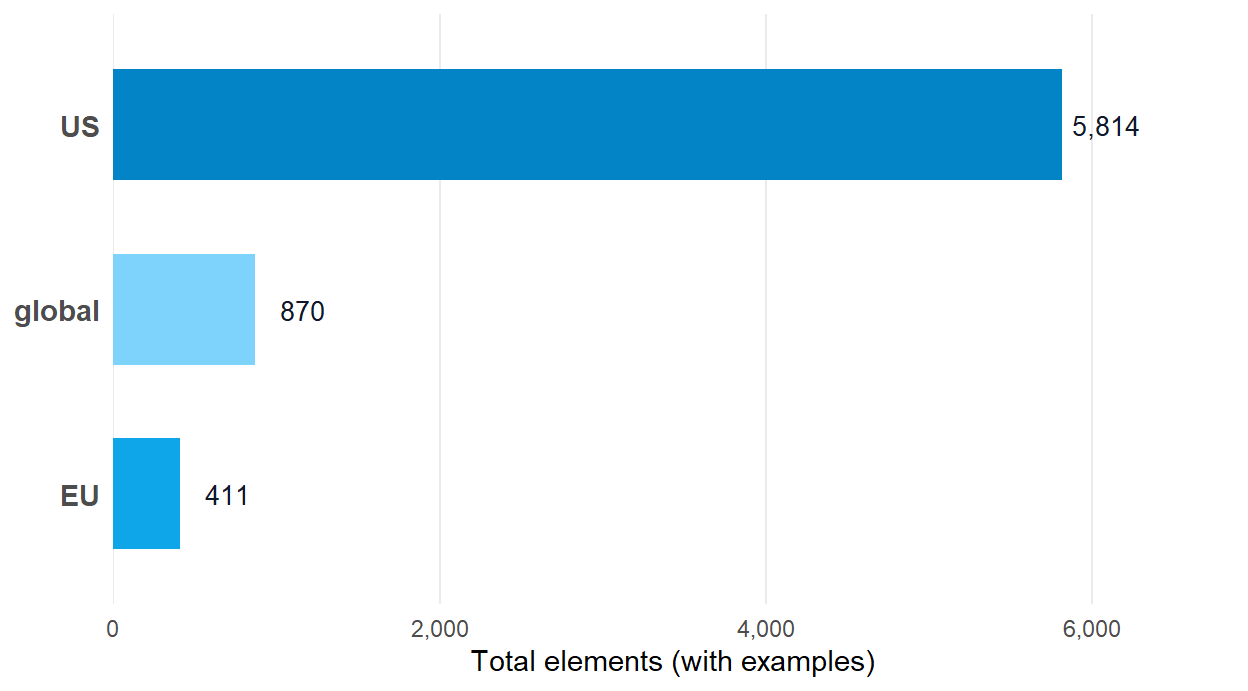

| US | NICE v2, DCWF v5.1, Cyber.org K-12 v1.0, CSTA K-12 CS (Rev 2017) | 4 | 5,814 |

| global | SFIA 9, ACM/IEEE CSEC2017 | 2 | 870 |

| EU | ECSF v1, DigComp 2.2 | 2 | 411 |

How is element coverage distributed across US and EU frameworks?

The 14-to-1 jurisdictional ratio, reframed

The question

How does total element coverage in cybedtools split across US, EU, and global frameworks, and what does the gap reflect?

Why it matters

Total element counts are an obvious cross-framework metric to reach for. They are also the easiest to misread. A reader who sees one jurisdiction’s element count vastly exceeding another’s may infer that the lagging jurisdiction is undercovered. The actual story is more often that the two jurisdictions chose different design philosophies for their workforce taxonomies.

The cybedtools corpus includes four US frameworks (NICE, DCWF, Cyber.org K-12, CSTA), two EU frameworks (ENISA ECSF, DigComp 2.2), and two global frameworks (SFIA, ACM/IEEE CSEC2017). The US-to-EU element-count ratio is large and worth naming, but the framing matters more than the figure.

The result

The four US frameworks contribute 5,814 elements. The two EU frameworks contribute 411. That is a US-to-EU ratio of roughly 14.1 to one. The two global frameworks add 870 more.
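As a quick check on the arithmetic, using only the totals in the table above:

$$
\frac{5814}{411} \approx 14.15, \qquad 5814 + 411 + 870 = 7095
$$

Measured against the 7,095 elements in the whole corpus, US frameworks account for roughly 82%, global frameworks for about 12%, and EU frameworks for about 6%.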

What this tells us

The ratio reflects design philosophy, not effort

NICE and DCWF were built to specify the US federal civilian and defense cyber workforces at hiring-pipeline granularity. The element count is the point. ECSF was authored as an EU-wide interoperability frame at profile granularity, deliberately light at the per-profile level. DigComp 2.2 specifies citizen-level digital competence, three nesting levels deep but deliberately compact. The frameworks were built to do different jobs.

Two materialization gaps sit on the EU side

ECSF profiles point at the European e-Competence Framework (e-CF 4.0) at fine granularity, and those cross-references are not expanded into RDF triples in the cybedtools v0.2.0 graph. DigComp 2.2’s Annex examples (the framework’s signature 2022 update) are not extracted as queryable nodes either. Both gaps mean cybedtools’ EU element count understates what those frameworks actually specify.

Reading the ratio as a coverage gap is wrong

US workforce frameworks were designed for US workforce-planning purposes. EU frameworks were designed for EU interoperability and citizen-self-assessment purposes. The 14-to-1 figure is what those design choices look like at the element layer.

What this doesn’t tell us

Element counts aren’t the only metric

Concept depth, real-world adoption, regulatory force, and steward-community size all matter for cross-jurisdictional comparison, and none of them appears in this figure.

Global frameworks sit outside this view

SFIA and CSEC2017 don’t fit cleanly into US or EU buckets. SFIA is UK-anchored with global commercial uptake. CSEC2017 is a joint ACM/IEEE/AIS/IFIP product. Both contribute element coverage that resists jurisdictional bucketing.

The cybedtools materialization is partial on the EU side

The figure understates EU specification depth because the e-CF cross-references and DigComp Annex examples aren’t yet extracted.

Reproduce this

The framework_summary tibble ships with the package as a lazy-loaded data object. Each framework is assigned to a jurisdiction through the jurisdiction column, so the jurisdiction totals above are sums over the frameworks in each jurisdiction.
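The following is a minimal sketch of that calculation in base R, not the package's own code. Because only the jurisdiction column is named here, the sketch uses a stand-in data frame built from the jurisdiction-level totals in the table at the top of this page. The column names `n_frameworks` and `total_elements` are hypothetical and should be checked against `names(framework_summary)` before the same steps are applied to the packaged object.

```r
# Stand-in for the packaged data: jurisdiction-level totals copied from the
# table at the top of this page. Only `jurisdiction` is a documented column
# name; `n_frameworks` and `total_elements` are hypothetical.
totals <- data.frame(
  jurisdiction     = c("US", "global", "EU"),
  n_frameworks     = c(4L, 2L, 2L),
  total_elements   = c(5814L, 870L, 411L),
  stringsAsFactors = FALSE
)

# With cybedtools installed, the framework-level rows of framework_summary
# would first be summed within each jurisdiction (for example with
# aggregate() or dplyr::summarise()) to obtain a table shaped like `totals`.

# Share of all elements contributed by each jurisdiction
totals$share <- totals$total_elements / sum(totals$total_elements)

# US-to-EU element-count ratio
us <- totals$total_elements[totals$jurisdiction == "US"]
eu <- totals$total_elements[totals$jurisdiction == "EU"]
us_eu_ratio <- us / eu

print(totals)
print(round(us_eu_ratio, 1))  # 14.1
```

Keeping the shares alongside the ratio makes it harder to read the 14.1 figure in isolation: the global frameworks' contribution stays visible instead of dropping out of a two-way comparison.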